![Blog - Best GPU for AI/ML, deep learning, data science in 2024: RTX 4090 vs. 6000 Ada vs A5000 vs A100 benchmarks (FP32, FP16) [ Updated ] | BIZON](https://bizon-tech.com/i/articles/deeplearning6/1.png)

Blog - Best GPU for AI/ML, deep learning, data science in 2024: RTX 4090 vs. 6000 Ada vs A5000 vs A100 benchmarks (FP32, FP16) [ Updated ] | BIZON

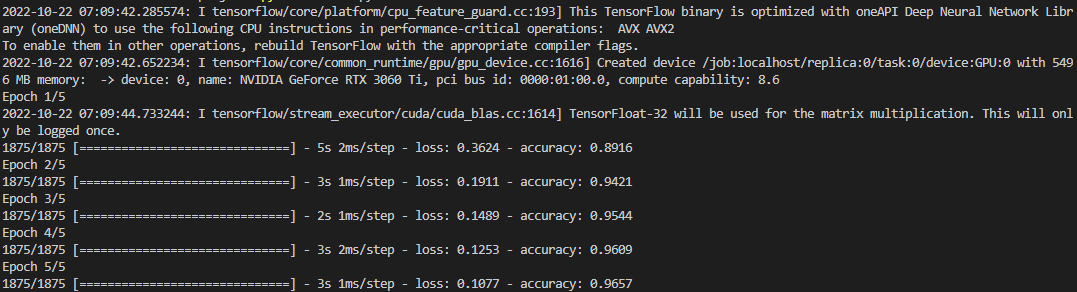

Using TensorFlow on Windows 10 with Nvidia RTX 3000 series GPUs | by Taylr Cawte | Analytics Vidhya | Medium

How Good is RTX 3060 for ML AI Deep Learning Tasks and Comparison With GTX 1050 Ti and i7 10700F CPU - YouTube

Should I get rtx 3060 or 3070 if I want to do machine learning? Is vram more important or tensor cores more important? - Quora

TensorFlow Performance with 1-4 GPUs -- RTX Titan, 2080Ti, 2080, 2070, GTX 1660Ti, 1070, 1080Ti, and Titan V | Puget Systems

![Blog - Best GPU for AI/ML, deep learning, data science in 2024: RTX 4090 vs. 6000 Ada vs A5000 vs A100 benchmarks (FP32, FP16) [ Updated ] | BIZON](https://bizon-tech.com/i/articles/deeplearning6/3.png)

Is Nvidia RTX 3060 good for beginners in Deep Learning? Crypto Mining Ban, is it going to help? - YouTube

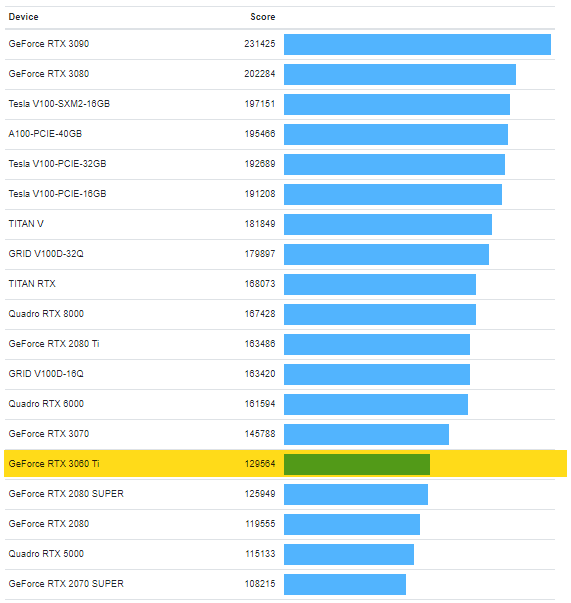

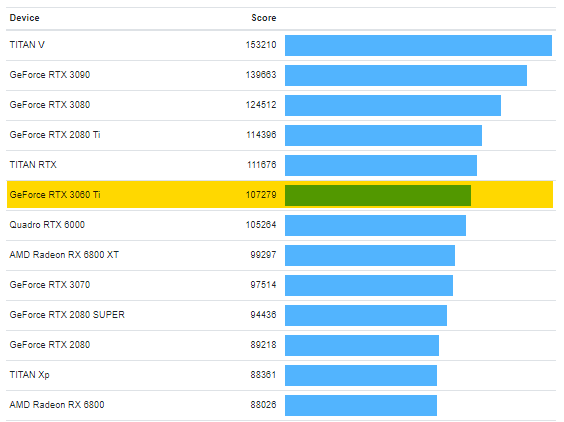

The NVIDIA GeForce RTX 3060 Ti posts strong performances in CUDA, OpenCL and Vulkan benchmarks - NotebookCheck.net News

NVIDIA GeForce Singapore - Work faster with acceleration of up to 47x TensorFlow with GeForce RTX laptops. 💻 More information: https://nvda.ws/3fBxfH5 | Facebook
